Interactive Smart City Display Explanation

For my computer science capstone project, I created an IoT installation for the Innovation Office at the City of Syracuse (a small, start-up-like office in City government), with the goal of making smart technology tangible and less intimidating to the public.

Read about the making of the project here.

Code repository is here.

Video of the openFrameworks app running:

Turn the sound on to hear the tones generated at certain distance stages. The openFrameworks app on the Raspberry Pi controls the LEDs via OPC (Open Pixel Control) and a FadeCandy.
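The app's own OPC code isn't reproduced in this post, so the following is only a minimal sketch of what an OPC message looks like, not the project's implementation. An Open Pixel Control "set pixel colors" message is a 4-byte header (channel, command 0, and the data length as a big-endian 16-bit number) followed by one RGB triple per LED. The helper names `opc_set_pixels` and `send_frame` are hypothetical, and `send_frame` assumes the FadeCandy server (`fcserver`) is running on the same machine on its default port, 7890.

```python
import socket
import struct

OPC_SET_PIXEL_COLORS = 0  # OPC command 0: set 8-bit RGB pixel colors


def opc_set_pixels(pixels, channel=0):
    """Build an OPC 'set pixel colors' message (hypothetical helper).

    pixels: iterable of (r, g, b) tuples with components in 0-255.
    channel: OPC channel number; channel 0 is a broadcast to all channels.
    """
    data = bytearray()
    for r, g, b in pixels:
        data.extend((r, g, b))  # raises ValueError if a component is outside 0-255
    # Header: channel (1 byte), command (1 byte), data length (2 bytes, big-endian).
    header = struct.pack(">BBH", channel, OPC_SET_PIXEL_COLORS, len(data))
    return header + bytes(data)


def send_frame(pixels, host="127.0.0.1", port=7890):
    """Send one frame to an OPC server such as fcserver (assumed default port)."""
    with socket.create_connection((host, port)) as sock:
        sock.sendall(opc_set_pixels(pixels))
```

Each call to `send_frame` replaces the colors of the whole strip, so an animation is just a stream of these messages sent many times per second.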

openFrameworks application running on the Raspberry Pi.

Video of the website receiving data:

This was a Bootstrap website served by a Node.js server on Heroku; it used Socket.IO to receive data.
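The post doesn't say what the data sent to the website looked like. As a sketch under that gap, assume each reading travels as a small JSON object with hypothetical fields `distance_cm` and `stage`, carried as the payload of a Socket.IO event. The Python below covers only encoding and validating that payload with the standard library; it does not implement the Socket.IO transport itself, and `encode_reading`/`decode_reading` are illustrative names.

```python
import json


def encode_reading(distance_cm, stage):
    """Serialize one sensor reading as JSON (hypothetical message shape)."""
    return json.dumps({"distance_cm": round(float(distance_cm), 1), "stage": int(stage)})


def decode_reading(message):
    """Parse and validate a reading on the receiving side.

    Returns a (distance_cm, stage) tuple, or None if the message is malformed,
    so a single bad packet does not break the page that displays the data.
    """
    try:
        data = json.loads(message)
        distance = float(data["distance_cm"])
        stage = int(data["stage"])
    except (ValueError, KeyError, TypeError):
        # JSONDecodeError is a ValueError; missing keys or wrong types land here too.
        return None
    if distance < 0 or stage < 0:
        return None
    return distance, stage
```

Validating on the receiving end keeps the display robust if the sender is restarted mid-message or a field is missing.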

Posters that I made:

Arduino: this is a "microcontroller", a device that is told to do one thing, and it does that one thing forever. It is similar to your cell phone, but instead of being able to run multiple apps at once, it can only run one app.
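Arduino programs ("sketches") express this one-thing-forever model with two functions: `setup()`, which runs once when the board powers on or resets, and `loop()`, which runs again and again for as long as the board has power. Real sketches are written in C++; to keep this post's examples in one language, the Python below only models that structure and bounds the loop so it can finish on a laptop. The class, its fake sensor readings, and the list standing in for the serial port are all hypothetical.

```python
class ArduinoModel:
    """Python stand-in for an Arduino sketch's setup()/loop() structure."""

    def __init__(self, readings):
        self.readings = iter(readings)  # hypothetical stand-in for a real distance sensor
        self.sent = []                  # stand-in for lines written to the serial port
        self.ready = False

    def setup(self):
        # Runs once at power-on, e.g. to configure pins and open the serial port.
        self.ready = True

    def loop(self):
        # Runs over and over: take one reading and report it as a line of text.
        distance_cm = next(self.readings)
        self.sent.append(f"{distance_cm}\n")


def run(board, iterations):
    """Mimic the Arduino runtime: setup() once, then loop() repeatedly.

    A real board never leaves this loop; here it stops after `iterations`.
    """
    board.setup()
    for _ in range(iterations):
        board.loop()
    return board.sent
```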

FadeCandy: this tells the light strip which individual lights to turn on and what color to make them. It's like a remote control for the lights, but operated by the computer.

Distance Sensor: this lets the Arduino tell how close you are. It works like the motion detectors on garage lights or the automatic doors at your favorite retail store.
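The post doesn't name the sensor model. Assuming a common ultrasonic sensor such as the HC-SR04, which reports how long a sound pulse takes to bounce back, the distance is half the echo time multiplied by the speed of sound (about 0.0343 cm per microsecond in room-temperature air). The sketch below also sorts distances into "stages" like the ones that trigger tones in the video; the threshold values are hypothetical, not the project's.

```python
import bisect

SPEED_OF_SOUND_CM_PER_US = 0.0343  # roughly 343 m/s in air at about 20 °C

# Hypothetical stage boundaries in centimetres (not the project's real values).
STAGE_THRESHOLDS_CM = [30, 60, 100, 150]


def echo_to_distance_cm(echo_us):
    """Convert an ultrasonic echo's round-trip time (microseconds) to distance."""
    # Divide by 2 because the pulse travels to the person and back.
    return echo_us * SPEED_OF_SOUND_CM_PER_US / 2


def distance_to_stage(distance_cm):
    """Return 0 for the closest stage, up to len(STAGE_THRESHOLDS_CM) when far away.

    A distance exactly on a boundary falls into the farther stage.
    """
    return bisect.bisect_right(STAGE_THRESHOLDS_CM, distance_cm)
```

Using a sorted threshold list with `bisect` keeps the stage logic in one table, so tuning the installation for a different room means editing numbers rather than a chain of `if` statements.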

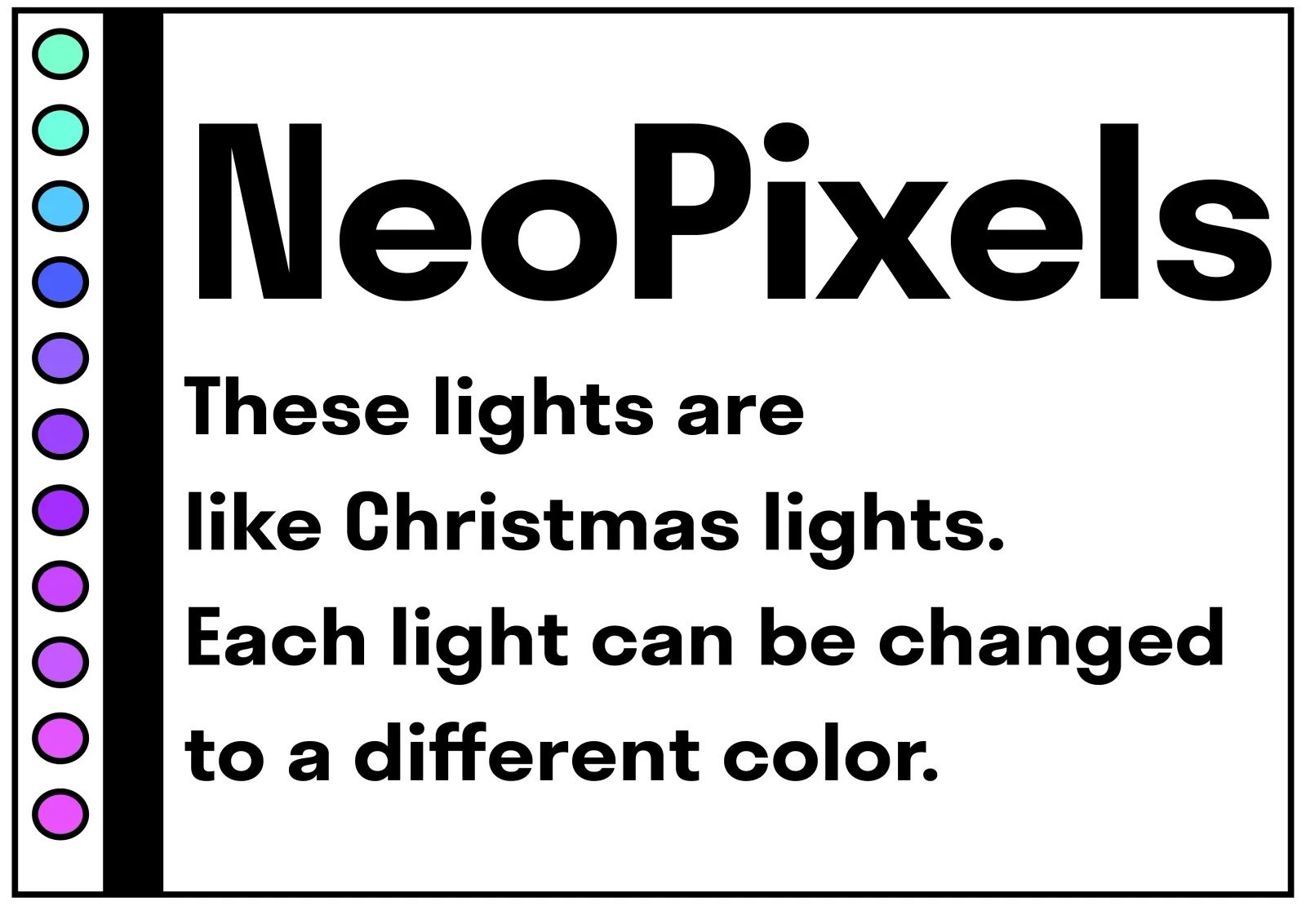

NeoPixels: these lights are like Christmas lights, except that each light can be set to a different color.

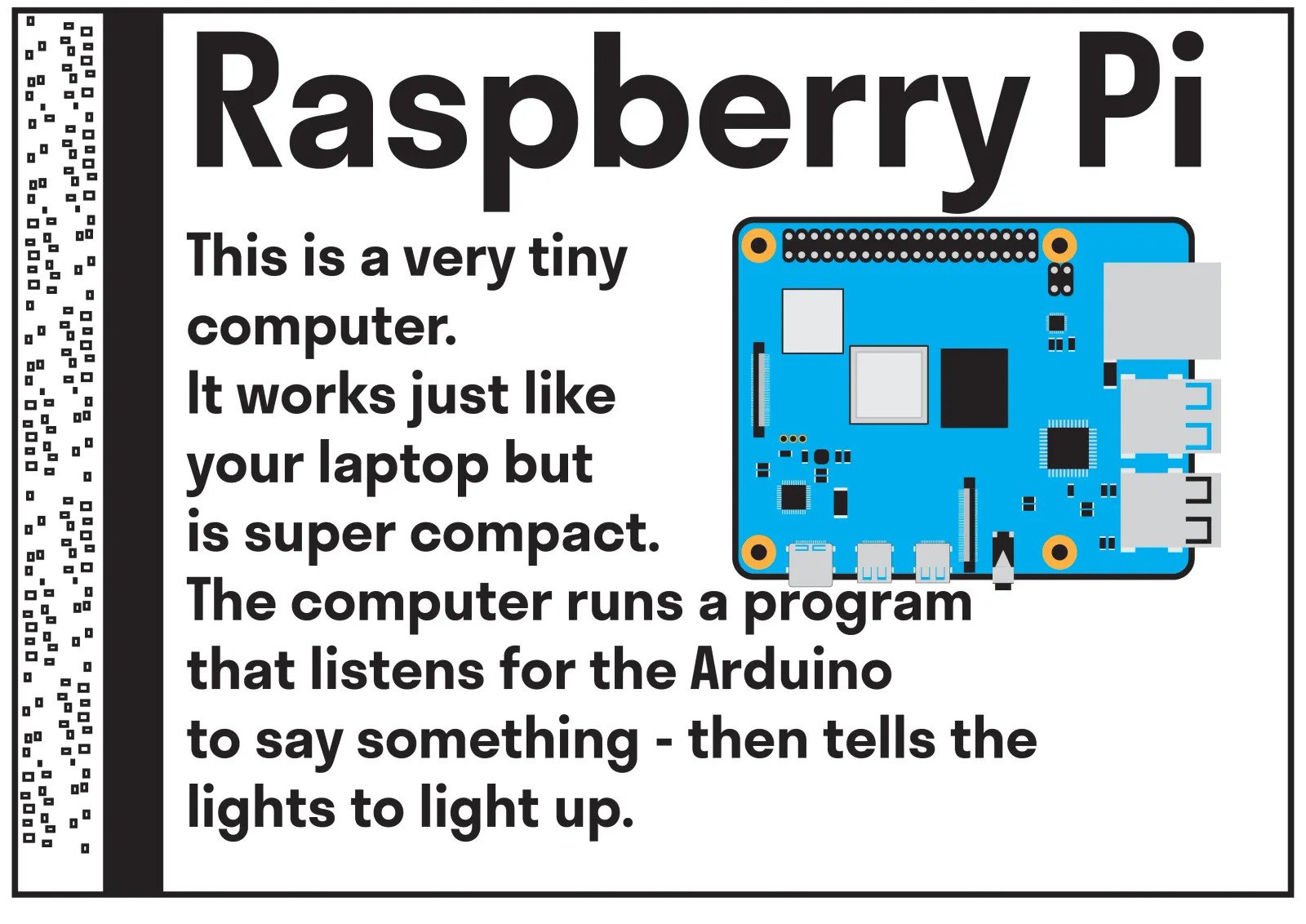

Raspberry Pi: this is a very tiny computer. It works just like your laptop but is super compact. It runs a program that listens for the Arduino to say something and then tells the lights to light up.
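On the Pi, "listening for the Arduino" usually means reading lines of text from the USB serial port and reacting to each one. The post doesn't show the message format, so this sketch assumes one distance reading per line (a hypothetical format) and accepts any iterable of lines, so it runs without hardware. With the third-party pySerial package, those lines could instead come from the open port's `readline()` calls. `listen` and the `on_reading` callback are hypothetical names; the callback stands in for the code that updates the lights.

```python
def listen(lines, on_reading):
    """Call on_reading(distance_cm) for each well-formed line; skip noise.

    lines: any iterable of str or bytes lines (e.g. raw serial output).
    Returns the number of readings handled.
    """
    handled = 0
    for raw in lines:
        if isinstance(raw, bytes):
            # Serial ports deliver bytes; drop anything that isn't plain ASCII.
            raw = raw.decode("ascii", errors="ignore")
        text = raw.strip()
        try:
            distance_cm = float(text)
        except ValueError:
            continue  # partial or garbled line, common right after the port opens
        on_reading(distance_cm)
        handled += 1
    return handled
```

Skipping unparseable lines instead of raising matters on real hardware: the first line read after opening a serial port is often cut off mid-number.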